Since the late 2000s there has been an explosion of non-relational (NoSQL if you must) data persistence technology. The industry buzz has focused on derivatives of the seminal work done at Google – i.e., Bigtable – and Amazon – i.e., Dynamo.

We’ve seen massive adoption of simple document stores and key-value stores, which focus on availability and partition tolerance and thereby enable storage and processing of schema-less (or semi-structured) data at velocities and volumes previously considered entirely impractical.

There were – however – compromises. These systems are abysmal at dealing with connections between the data – or more precisely connecting the entities in the data sets with one another in a variety of contexts.

Many of our platforms, systems, applications and services are intended to deal with exactly these types of connections, and unfortunately most engineering teams fall back on relational databases to handle them. The trouble with this approach is that relational databases are inherently inefficient when performing complex set operations across many joined tables:

> The true value of the graph approach becomes evident when one performs searches that are more than one level deep. For instance, consider a search for users who have “subscribers” (a table linking users to other users) in the “311” area code. In this case a relational database has to first look for all the users with an area code in “311”, then look in the subscribers table for any of those users, and then finally look in the users table to retrieve the matching users. In comparison, a graph database would look for all the users in “311”, then follow the back-links through the subscriber relationship to find the subscriber users. This avoids several searches, lookups and the memory involved in holding all of the temporary data from multiple records needed to construct the output. Technically, this sort of lookup is completed in O(log(n)) + O(1) time, that is, roughly relative to the logarithm of the size of the data. In comparison, the relational version would be multiple O(log(n)) lookups plus additional time to join all the data.[3]

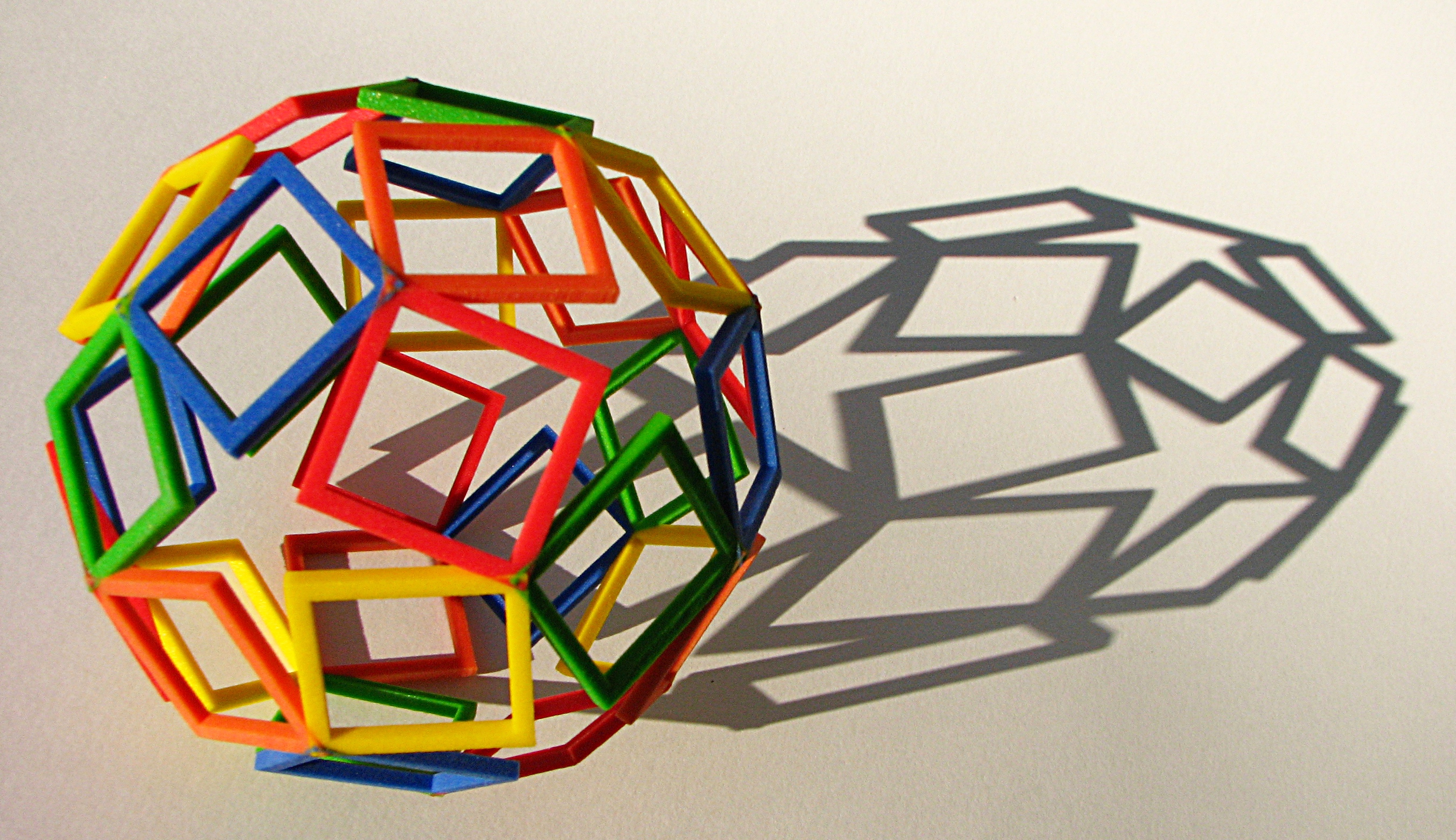

Via Wikipedia
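To make the difference concrete, here is a minimal Python sketch of the two lookup strategies described in the quote. It is an illustration only, not how any particular database is implemented: the sample users, the `subscribers` link table, and the function names are all hypothetical, and plain Python lists and object references stand in for real tables, indexes, and graph storage.

```python
from collections import defaultdict

# Hypothetical sample data: (user_id, name, area_code)
users = [
    (1, "Ana", "311"),
    (2, "Ben", "415"),
    (3, "Cy", "311"),
    (4, "Dee", "212"),
]
# Hypothetical link table: (user_id, subscriber_id)
subscribers = [(1, 2), (1, 4), (3, 4), (2, 3)]


def relational_style(users, subscribers, area_code):
    """Three passes, as in the quote: filter users, scan the link table, look users up again."""
    matching_ids = {uid for uid, _, code in users if code == area_code}
    subscriber_ids = {sid for uid, sid in subscribers if uid in matching_ids}
    return sorted(name for uid, name, _ in users if uid in subscriber_ids)


class Node:
    """A user that holds direct references to its subscriber nodes."""

    def __init__(self, name, area_code):
        self.name = name
        self.area_code = area_code
        self.subscribers = []


def build_graph(users, subscribers):
    """Build the nodes once and return an index from area code to nodes."""
    nodes = {uid: Node(name, code) for uid, name, code in users}
    by_area = defaultdict(list)
    for node in nodes.values():
        by_area[node.area_code].append(node)
    for uid, sid in subscribers:
        nodes[uid].subscribers.append(nodes[sid])
    return by_area


def graph_style(by_area, area_code):
    """One index lookup, then follow stored references; no second lookup in a users table."""
    found = {sub.name for node in by_area.get(area_code, []) for sub in node.subscribers}
    return sorted(found)


print(relational_style(users, subscribers, "311"))
print(graph_style(build_graph(users, subscribers), "311"))
```

Both functions return the same names. The difference is in the work done per query: the relational-style version rescans the link table and the users table each time, while the graph-style version pays the cost of wiring up the references once and then simply walks them.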

The opportunity to build highly efficient systems by leveraging natural graph models (those that exist in the real world) is massive and dramatically underutilized.

Imagine a CRM system that wasn’t tightly coupled to a rigid account-contact-entitlement hierarchy. Imagine a student roster system that directly modeled the complexity of sections for teachers, schools, districts and states without a myriad of join tables.
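As a rough sketch of what that roster might look like, the Python below stores the whole hierarchy as typed edges and answers "which district or state does this person roll up to?" with a single traversal. The node ids, relationship names, and the `reachable` helper are all invented for illustration; a real graph database would give you a query language for this rather than a hand-rolled walk.

```python
from collections import defaultdict, deque

# Hypothetical roster edges: (source, relationship, target)
edges = [
    ("student:maya", "ENROLLED_IN", "section:algebra-1"),
    ("teacher:ortiz", "TEACHES", "section:algebra-1"),
    ("section:algebra-1", "OFFERED_BY", "school:lincoln"),
    ("school:lincoln", "PART_OF", "district:north"),
    ("district:north", "PART_OF", "state:ca"),
]

# Adjacency list: each node maps to its outgoing (relationship, target) pairs
graph = defaultdict(list)
for source, relationship, target in edges:
    graph[source].append((relationship, target))


def reachable(start, prefix):
    """Breadth-first walk along outgoing edges; collect node ids that start with prefix."""
    seen = {start}
    queue = deque([start])
    hits = []
    while queue:
        current = queue.popleft()
        for _, target in graph.get(current, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
                if target.startswith(prefix):
                    hits.append(target)
    return hits


print(reachable("student:maya", "district:"))
print(reachable("teacher:ortiz", "state:"))
```

Adding a new level to the hierarchy, or a new kind of relationship, means adding edges rather than new join tables, which is the flexibility the paragraph above is getting at.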

The only barrier is investing in the education required to understand the efficiencies of graphs and the graph databases available today. I highly recommend you up your game by downloading Neo4j today and beginning to learn the advantages graph databases can provide in your polyglot persistence architectures. If you need any help, let me know – I’m happy to help you and your team model your data and leverage a graph to simplify your system and increase your feature velocity.